Nowadays we work on all kinds of applications, from CMSs and Symfony framework projects to eCommerce applications (like Magento 2) and everything in between. I’d like to come back to one of the most popular CMS applications we encounter, Drupal, and how we delivered an easily scalable and modular configuration for one of our customers.

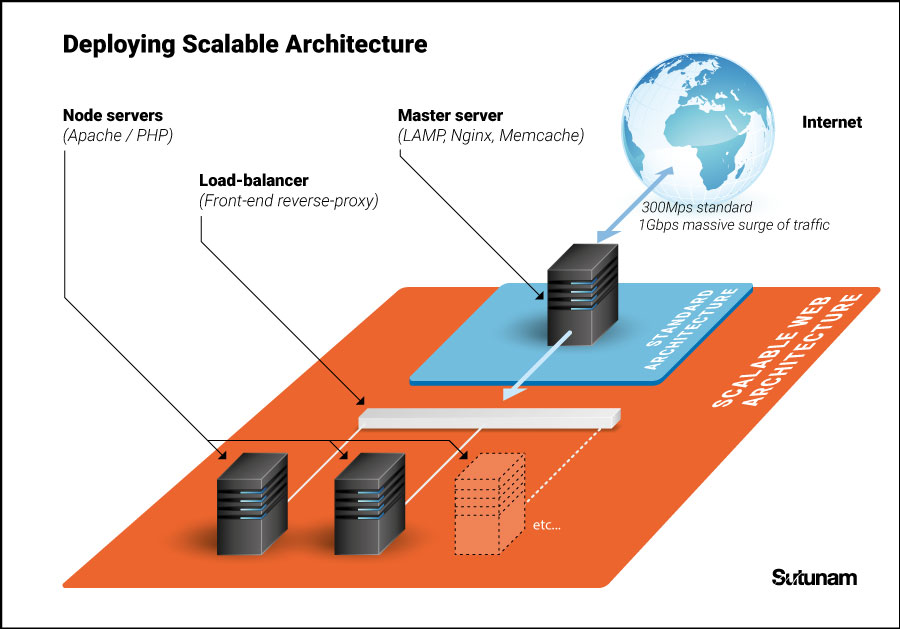

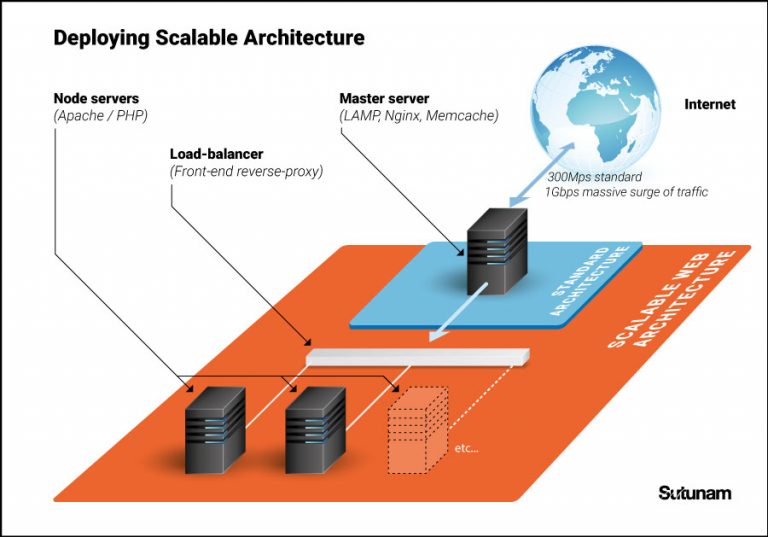

For the second year, the team behind http://www.tousaurestaurant.com/ is working with us, Sutunam, to optimize their Drupal site for the high traffic peaks they expect. The end result is a Drupal site using load balancers and cloud servers running on LAMP images, with Nginx as the front-end reverse-proxy server. A reverse proxy is helpful when the demands of serving a single website outgrow the capabilities of a single machine. Like your browser, it caches content that has not yet expired.

The Architecture and Servers

The first step is to build the cloud environment. For this particular case we use the following configuration:

- 1 master server running Debian LAMP (Apache2, MySQL and PHP) plus Nginx, which is the main web front-end reverse proxy and is used as the load balancer.

- A number of node servers working as slaves with Apache and PHP

- (Optional: 1 file container using the CDN)

The master server

We run a LAMP environment on the main server (front-end proxy) with Nginx and Memcache. This server acts as a proxy and is the main server throughout the year.

For special occasions such as media broadcasts, Nginx on this server works as a reverse proxy and load balancer. Nginx first caches all static content to speed up the website, then balances the remaining load across all the servers.

Drupal pages and content are also configured and optimized for caching.

The node servers

Node servers run the PHP processes. Besides Apache and PHP, each node holds a copy of the Drupal source code, which is replicated from the master server at most every 10 seconds.

Every change to the file system is detected through the inotify feature of the Linux kernel. Using the algorithm provided by lsyncd, only the modified files are sent over SSH, using rsync.
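To illustrate the idea of grouping file changes and sending them in one pass, here is a minimal Python sketch. It is not lsyncd's actual algorithm, only a simplified model of "collect changed paths, then flush them after a bounded delay". The class name `ChangeBatcher`, its methods, and the file names are hypothetical; the 10-second default mirrors the delay mentioned above.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class ChangeBatcher:
    """Collects changed paths and releases them as one batch after a delay.

    Hypothetical helper for illustration; not part of lsyncd.
    """

    max_delay: float = 10.0  # seconds, mirrors the "every 10 seconds" figure
    _pending: Set[str] = field(default_factory=set)
    _first_event_at: Optional[float] = None

    def record(self, path: str, now: float) -> None:
        """Remember that `path` changed; repeated changes collapse into one entry."""
        if not self._pending:
            self._first_event_at = now
        self._pending.add(path)

    def flush_if_due(self, now: float) -> List[str]:
        """Return the sorted batch once the oldest change has waited `max_delay`."""
        if not self._pending or now - self._first_event_at < self.max_delay:
            return []
        batch = sorted(self._pending)
        self._pending.clear()
        self._first_event_at = None
        return batch


batcher = ChangeBatcher()
batcher.record("sites/default/settings.php", now=0.0)
batcher.record("sites/default/settings.php", now=3.0)  # same file, still one entry
batcher.record("themes/custom/style.css", now=4.0)
files_to_send = batcher.flush_if_due(now=10.0)
```

A file edited several times within the window is transferred once, which keeps the number of sync runs low during a burst of changes.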

These servers do not run MySQL, so they query the MySQL and Memcache applications on the master server directly.

How does it work?

The Nginx front-end proxy service follows this process:

- Nginx receives a request (from the Internet)

- Nginx checks whether a cached response exists for the request; if not, it sends a second request to one of the node servers, balancing the load, and gets a response

- Nginx returns the response of that request to the original requester
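The three steps above can be modelled in a few lines of Python. This is only a sketch of the flow, not how Nginx is implemented: cache expiry is left out, the weighted rotation is a naive one, and `CachingProxy`, `weighted_cycle`, `fake_fetch` and the node names are all hypothetical.

```python
import itertools
from typing import Callable, Dict, Iterator, List, Tuple


def weighted_cycle(nodes: List[Tuple[str, int]]) -> Iterator[str]:
    """Yield node names forever, each repeated in proportion to its weight."""
    expanded = [name for name, weight in nodes for _ in range(weight)]
    return itertools.cycle(expanded)


class CachingProxy:
    """Toy model of the front-end proxy: serve from cache, else ask a node."""

    def __init__(
        self,
        nodes: List[Tuple[str, int]],
        fetch: Callable[[str, str], str],
    ) -> None:
        self._cache: Dict[str, str] = {}
        self._next_node = weighted_cycle(nodes)
        self._fetch = fetch

    def handle(self, url: str) -> str:
        # Step 2a: a cached response is returned without touching any node.
        if url in self._cache:
            return self._cache[url]
        # Step 2b: otherwise pick the next node and forward the request.
        node = next(self._next_node)
        response = self._fetch(node, url)
        self._cache[url] = response
        # Step 3: the response goes back to the original requester.
        return response


calls: List[Tuple[str, str]] = []


def fake_fetch(node: str, url: str) -> str:
    """Stand-in for a real HTTP request to a node server."""
    calls.append((node, url))
    return f"{node} rendered {url}"


proxy = CachingProxy([("node-a", 2), ("node-b", 1)], fake_fetch)
first = proxy.handle("/menu")
second = proxy.handle("/menu")  # served from cache, no node is contacted
```

Only the first request for `/menu` reaches a node; the second is answered from the proxy's cache, which is what keeps most of the traffic away from the PHP processes.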

Scaling

To scale quickly, we create an image of the node server. A deploy script synchronizes files, configures the firewall, creates config files, and adds the newly created node server to Nginx and to the lsyncd process. Once Nginx is reloaded, the node server is deployed and ready to respond to requests.

This way, adding a new node server takes less than 60 seconds. The deploy script automatically detects the number of cores of the new server and assigns it the right weight for the Nginx load balancer.
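As a rough idea of what the weighting step could look like, the Python sketch below renders an Nginx `upstream` block from a map of hosts to core counts. It assumes the weight is simply the core count, which is our simplification here; the real deploy script may use a different rule. The function name `render_upstream`, the upstream name and the IP addresses are hypothetical.

```python
from typing import Dict


def render_upstream(name: str, cores_by_host: Dict[str, int]) -> str:
    """Render an Nginx `upstream` block, using each host's core count as weight.

    Assumption: weight == number of cores. Hosts are sorted so the output
    is stable between runs.
    """
    lines = [f"upstream {name} {{"]
    for host, cores in sorted(cores_by_host.items()):
        lines.append(f"    server {host} weight={cores};")
    lines.append("}")
    return "\n".join(lines)


# Hypothetical node addresses and core counts.
config = render_upstream("drupal_nodes", {"10.0.0.12": 16, "10.0.0.11": 32})
print(config)
```

With these inputs, the 32-core node receives twice the share of requests of the 16-core node once Nginx is reloaded with the generated block.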

Limitations

To maximize speed, the Drupal applications on the node servers do not communicate with the Memcache on the master server. Each uses its own local Memcache instance instead, which saves 120 ms per request. However, Drupal does not yet support propagating cache changes across multiple caches, so when content is changed from the admin interface on the master server, the cache stored on the node servers is not cleared.
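The stale-cache effect is easy to reproduce in a small Python model. Here a plain dict stands in for the MySQL database and `NodeCache` stands in for the Memcache instance local to one node; both names, the key `node/1` and the titles are hypothetical.

```python
from typing import Callable, Dict


class NodeCache:
    """Stands in for the Memcache instance local to one node server."""

    def __init__(self) -> None:
        self._entries: Dict[str, str] = {}

    def get(self, key: str, load: Callable[[str], str]) -> str:
        """Return the cached value, loading it from the database on a miss."""
        if key not in self._entries:
            self._entries[key] = load(key)
        return self._entries[key]

    def clear(self) -> None:
        """Drop every cached entry on this node only."""
        self._entries.clear()


database = {"node/1": "old title"}  # shared store on the master server
node_a, node_b = NodeCache(), NodeCache()

first_a = node_a.get("node/1", database.__getitem__)  # node A caches the page
database["node/1"] = "new title"                      # admin edits the content
stale_a = node_a.get("node/1", database.__getitem__)  # node A still has the old copy
fresh_b = node_b.get("node/1", database.__getitem__)  # node B had no copy yet
```

Until `node_a.clear()` is called, or the entry expires, node A keeps serving the old title while node B serves the new one. Visitors can therefore see different versions of a page depending on which node answers.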

Another limitation is the MySQL server on the master. It is the bottleneck of this architecture: because it is not replicated, it cannot handle an unlimited number of requests.

Results

With this configuration, Drupal is set up to absorb heavy traffic in an easily scalable multi-server environment. We handled 13,000 concurrent users (visitors, as counted by Google Analytics) on 13 node servers with 32 cores/128 GB RAM. We successfully served around 500k pages in one hour to 100k visitors.
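To put these figures in perspective, the short Python calculation below derives a few averages from them. The inputs are the rounded numbers quoted above, so the results are rough. The per-node figure also assumes an even spread and ignores requests answered directly from the Nginx cache.

```python
# Rounded figures quoted in the results above.
pages_served = 500_000
visitors = 100_000
concurrent_users = 13_000
node_servers = 13
seconds_per_hour = 3600

# Average throughput over the hour.
pages_per_second = pages_served / seconds_per_hour

# Average number of pages viewed by each visitor.
pages_per_visitor = pages_served / visitors

# Average concurrent users per node, assuming an even spread.
users_per_node = concurrent_users / node_servers

print(f"{pages_per_second:.1f} pages/s")
print(f"{pages_per_visitor:.1f} pages per visitor")
print(f"{users_per_node:.0f} concurrent users per node")
```

That works out to roughly 139 pages per second on average, about 5 pages per visitor, and about 1,000 concurrent users per node.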

If you are expecting a surge in your website traffic or if you have questions, do not hesitate to contact us or check out our Hosting services at Sutunam.